Aria EarthMOOG | Artify the Earth



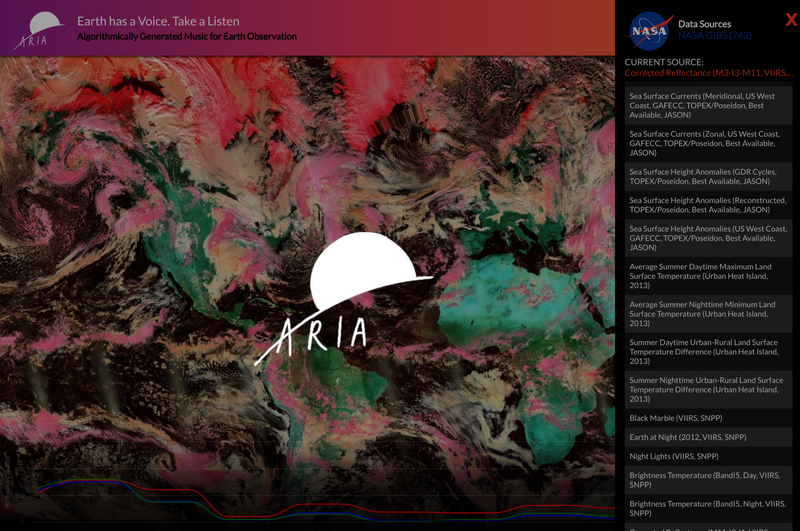

Aria: Earth has a Voice. Take a Listen.

Algorithmically generated music from an Earth Observation data synthesizer

Team Introduction

We’re team “ARIA”: Marlene Guzman, Jen Watanabe, Melissa Ugas, and Orestis Herodotou.

We all live in California, USA. Read our bios at the end of this page!

The Challenge

Artify the Earth! We hope to widen the audience and engage people of all ages to take another look at how science works and create new insights from Earth Observation datasets!

Our Solution

Aria | The Earth has a Voice. Take a Listen.

Aria is a uniquely interactive map experience accessible to people of all ages. With Aria, anyone can explore and engage with science and the Earth. Our acoustic and visual maps generate music from satellite and remote sensing data, letting you interact with your world through sound, sight, and touch.

Our passion for the planet, technology, data, and music inspired us to blend remote sensing data, color and sound theory, and maps together into one experience. This way maps go from a utility to an immersive and innovative STEAM-based artistic representation of the Earth!

Our Idea

It’s natural to wonder about the Earth and beyond. Satellite maps and remote sensing data help us visualize our planet, but they can be hard to interpret without being an expert. We add a unique dimension to this data: a more natural and engaging way to interact with it, learn from it, and even discover new insights.

What does the image of an island or storm sound like on a map?

What can you tell from a song whose notes reflect changes in vegetation cover, or temperature values?

We algorithmically generate music and sounds from data in real time while visualizing the results. You create the audible and visual melodies as you interact with the maps, activating all your senses as you explore the Earth in this visceral and unique way. We believe data is beautiful, and we set out to create a new way to experience it!

Why it’s Important

Creating charismatic data through Aria’s dynamic soundscapes draws in audiences of all kinds, from art and science enthusiasts to adults and children alike. Exploring data with Aria is a fun, exciting, and engaging new way to learn about the science behind the data, and we hope to get everyone excited about earth sciences, the environment, and the work NASA does to help us understand our place in the world!

For families, we picture Aria being used to aid parent-child bonding. Parents can sit down with their children and explain locations or maps while having fun. Aria also creates new ways to interact with data for those who may not rely on traditional ways of experiencing the world, like people with visual or hearing disabilities.

How it works

As a user pans and zooms around the interactive web map, imagery from NASA Global Imagery Browse Services (GIBS), which offers hundreds of amazing maps, determines which musical notes and sounds are played. We use the color values of the web map tile images sent to the web browser, as well as individual pixel RGB (Red, Green, Blue) values, to generate unique sounds and melodies. We calculate various properties of the color values, for example luminance, and translate them to notes on a musical scale. We also process the images to extract the most prominent colors in the scenes, and look at how much variation there is. This creates a musical experience that lets us hear the data from a new and unexpected perspective!
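The luminance-to-note step described above could look something like the following. This is only a minimal sketch, not Aria’s actual code: the Rec. 709 luminance weights, the pentatonic scale, and the function names `luminance`, `valueToMidi`, and `pixelsToNotes` are all assumptions made for illustration.

```javascript
// Hypothetical sketch: turn RGB pixels into notes on a musical scale.

// Approximate luminance of an 8-bit [r, g, b] pixel, scaled to 0..1.
// Uses Rec. 709 weights and skips gamma correction for simplicity (assumption).
function luminance([r, g, b]) {
  return (0.2126 * r + 0.7152 * g + 0.0722 * b) / 255;
}

// Major pentatonic scale as semitone offsets from the root (assumed scale).
const PENTATONIC = [0, 2, 4, 7, 9];

// Map a value in 0..1 to a MIDI note number on the scale, climbing
// `octaves` octaves upward from `rootMidi` (60 is middle C).
function valueToMidi(value, rootMidi = 60, octaves = 2) {
  const steps = PENTATONIC.length * octaves;
  const clamped = Math.min(Math.max(value, 0), 1);
  const index = Math.min(Math.floor(clamped * steps), steps - 1);
  const octave = Math.floor(index / PENTATONIC.length);
  return rootMidi + 12 * octave + PENTATONIC[index % PENTATONIC.length];
}

// Darker pixels become lower notes, brighter pixels become higher notes.
function pixelsToNotes(pixels) {
  return pixels.map((pixel) => valueToMidi(luminance(pixel)));
}

// Black, mid-gray, and white pixels.
console.log(pixelsToNotes([[0, 0, 0], [128, 128, 128], [255, 255, 255]]));
```

In a browser, the pixel values could be read from a map tile drawn onto a canvas with `getImageData`, and the resulting note numbers handed to a synthesizer library. Snapping to a pentatonic scale is one way to keep arbitrary data from sounding dissonant.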

We already have over 700 datasets available in the app from GIBS, and the underlying technology we use makes it easy to continue adding new data.

Technologies we used:

- Datasets: Geospatial and remote sensing datasets from NASA Global Imagery Browse Services (GIBS), REST APIs

- Concepts: Color theory, music theory, data visualization best practices, remote sensing data formats and instruments, electromagnetic spectrum, physics of light and sound, synthesizers!!

- Tools: Version control and collaborative coding, web mapping, advanced web development with modern ES6 JavaScript syntax, code quality and automation

- Open source libraries: OpenLayers, ToneJS, Tonal, Vibrant, ReactJS, Webpack, Chroma

What’s Next?

The Aria experience of the future has no bounds, and we’re so excited to continue working on this project! With endless data sets available, we will continue to add new layers of Earth observation data to translate into sound through your own personal touch. The Aria team plans on introducing a variety of unique new interactions with maps and datasets, including links to relevant information and learning resources for datasets, and even the ability to "Remix" multiple datasets together!

We’d love to improve our algorithms, and even explore using deep learning to create more interesting sounds. We’ll also be adding new technologies like augmented reality simulation that will let you control the sounds of the Earth.

Our team also plans to expand to the cosmos by integrating solar system and star maps. We’d love to launch a new version called “Aria: Exploring a Universe of Wonder” that uses radio telescope data and astronomy images like those from the Hubble Space Telescope.

AR on your phone demo!

Immersive projection demo inside a geodesic dome!

We have already experimented with Augmented Reality/Virtual Reality formats that respond to motion, as well as large-format installations where we can create immersive and collaborative environments with 360-degree projection mapping, proximity sensors, and gesture recognition.

Finally, as we continue to gather support for this project, we’d love to make this dynamic experience and our app even more accessible to people with visual or aural disabilities.

What we learned and challenges we faced

Most of us were very new to the broad world of remote sensing and geospatial data, so learning about map projections and raster data fascinated us while we coded. In order to generate sound, we had to dive into some music theory, learning about pitch, harmonies, dissonance, scales, and the mathematical relationships or ‘distances’ between different sounds.

We even touched on some of the physics behind how sound works! We watched videos online about how musical notes relate to frequency and how to express them in hertz! Since we used color values to represent the data, we also needed to read up on some color theory and understand the components that make up color, as well as how these colors relate to the electromagnetic spectrum and how they are used to represent datasets in such different ways.
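As a small illustration of that note-to-frequency relationship, here is a sketch using the standard equal-temperament formula with A4 tuned to 440 Hz. The function name `midiToHertz` is hypothetical and is not taken from our codebase or from any library.

```javascript
// Hypothetical helper: convert a MIDI note number to its frequency in hertz.
// Assumes 12-tone equal temperament with A4 (MIDI note 69) tuned to 440 Hz.
// Each semitone multiplies the frequency by the twelfth root of 2,
// so moving up 12 semitones (one octave) doubles it.
function midiToHertz(midi) {
  return 440 * Math.pow(2, (midi - 69) / 12);
}

console.log(midiToHertz(69)); // A4
console.log(midiToHertz(81)); // A5, one octave above A4
console.log(midiToHertz(60).toFixed(2)); // middle C
```

This is the sense in which the ‘distance’ between two notes is mathematical: the number of semitones between them fixes the ratio of their frequencies.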

All the while, we still had to code our project! That posed a new set of challenges as we leveraged new libraries and techniques while working with the new tools that collaborative coding requires.

We discovered so many connections between our often segregated technologies, and how important it is to re-conceptualize data and incorporate useful and fun tools to reach a wide audience. Using EO data and open source technologies, we were able to create something artistically unique with vast potential for growth!

Who we are

MARLENE GUZMAN

#Dreamer, Life Hacker, Bio hacker, (computer hacker) and self-taught software engineer.

Advocate for under-served youth, animals and nature. I am passionate about helping others and providing solutions. I am a student of life. When I'm not out hacking, you can find me moving around to the rhythm of salsa or gardening. My goal in life: I strive to be the person my dog thinks I am.

JENNIFER WATANABE

Background in the arts. Currently web developer. Excited to merge science and art.

Melissa Ugas

Technologist and creator. An advocate for human rights, environmental justice, and immigrant rights. Other passions include indulging in local foodscapes, art+music, and expanding my collection of plants and succulents.

Orestis Herodotou

I'm a lifelong learner who's passionate about the intersection of technology, education, and environmentalism, and I am fascinated with remote sensing and space exploration. When I'm not coding, you can find me sailing or enjoying our planet while backpacking in the mountains.

SpaceApps is a NASA incubator innovation program.